The End of the Screen: Why 2026 Is the Year AI Grew Legs and Wheels

For decades, technology lived inside screens.

Computers, smartphones, tablets, dashboards, applications. Everything happened there: pixels organized into interfaces that represented the digital world.

But something has started to change.

In 2026, artificial intelligence is leaving the screens and entering the physical world. Robots are walking through warehouses, cars are beginning to drive themselves in controlled environments, and computer vision systems interpret the world around us in real time.

We are entering the era of Physical AI.

The Revenge of the Physical World

For a long time, software dominated everything.

Billion-dollar companies were built without physical assets: social networks, marketplaces, SaaS, digital platforms. The rule was clear: the fewer atoms and the more bits, the better.

But now we are seeing a reversal.

Artificial intelligence has evolved to the point where it can interpret the physical world with enough precision to act within it.

This opens the door to a new kind of technology: systems that think and also execute in the real world.

Not just software.

Machines.

Robots That Actually Work

For years, robots were associated with automotive factories.

Large mechanical arms, extremely precise, but also extremely limited. They only worked in highly controlled environments.

Today, this is changing.

Modern robots use AI, sensors, and computer vision to understand complex environments.

They can:

- identify objects

- sort products

- transport loads

- work alongside humans

Logistics centers are becoming true hybrid ecosystems of humans and machines.

Instead of replacing people, many of these systems increase the productivity of human teams.

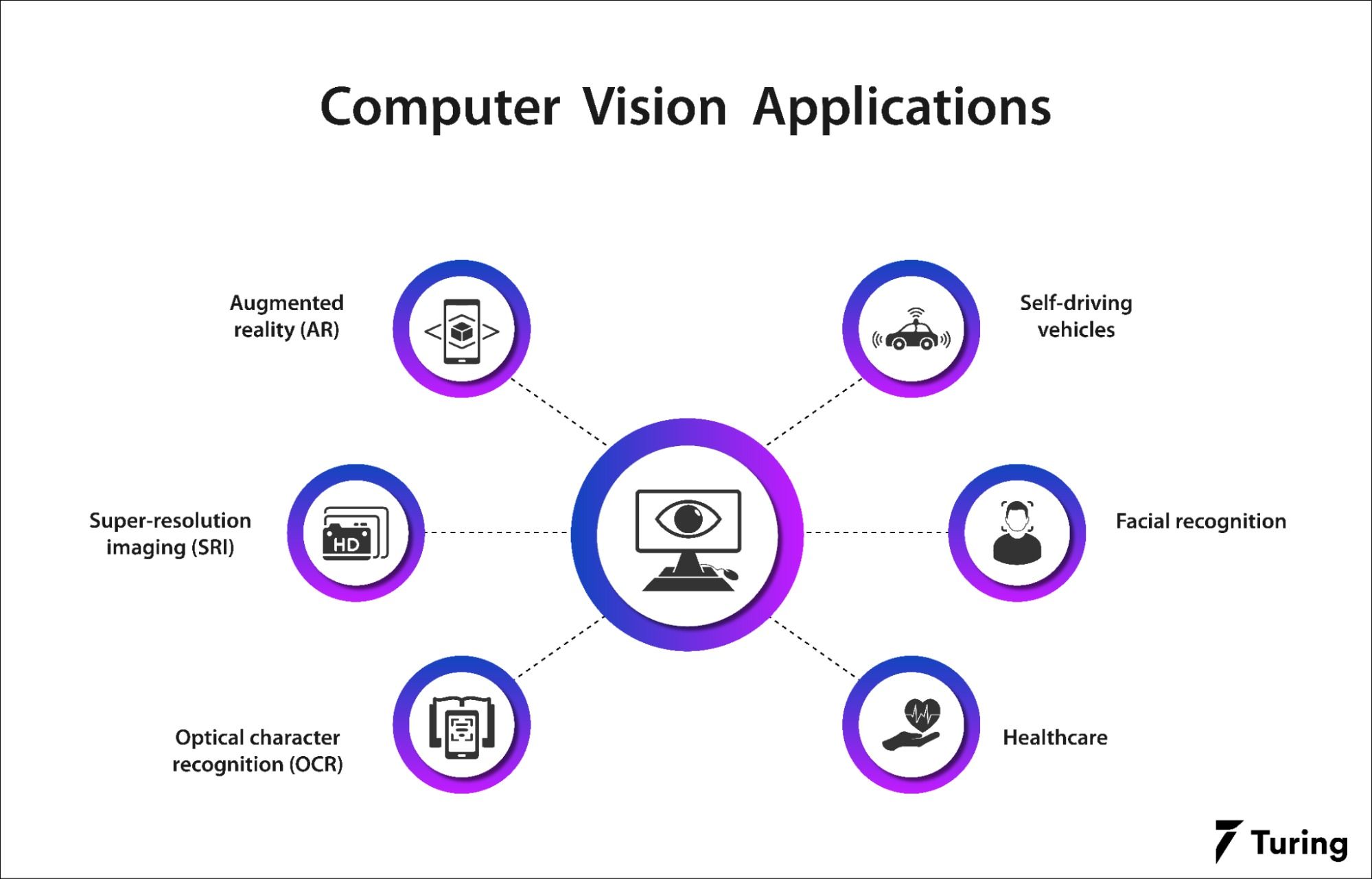

Computer Vision as the Bridge

If there is a silent technology behind this revolution, it is computer vision.

For a robot to act in the physical world, it must first understand what it is seeing.

This involves:

- recognizing movement

- identifying objects

- interpreting dynamic environments

In practice, this means transforming images from the real world into structured data.

And when machines can interpret images with enough precision, something powerful happens:

The physical world becomes programmable.
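To make "images into structured data" concrete, here is a minimal sketch. It assumes a tiny grayscale image stored as a list of rows and treats every connected bright region as an "object". Real systems use trained models rather than a brightness threshold; the names `DetectedObject` and `detect_objects` are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass


@dataclass
class DetectedObject:
    """One structured record extracted from the image (hypothetical schema)."""
    label: str
    x_min: int
    y_min: int
    x_max: int
    y_max: int
    area: int  # number of pixels in the region


def detect_objects(image, threshold=128):
    """Group bright pixels into 4-connected regions and return one record per region."""
    height = len(image)
    width = len(image[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    objects = []

    for y in range(height):
        for x in range(width):
            if seen[y][x] or image[y][x] < threshold:
                continue
            # Flood fill from this pixel to collect the whole region.
            stack = [(x, y)]
            seen[y][x] = True
            xs, ys = [], []
            while stack:
                cx, cy = stack.pop()
                xs.append(cx)
                ys.append(cy)
                for nx, ny in ((cx + 1, cy), (cx - 1, cy), (cx, cy + 1), (cx, cy - 1)):
                    inside = 0 <= nx < width and 0 <= ny < height
                    if inside and not seen[ny][nx] and image[ny][nx] >= threshold:
                        seen[ny][nx] = True
                        stack.append((nx, ny))
            objects.append(
                DetectedObject(
                    label=f"object_{len(objects) + 1}",
                    x_min=min(xs), y_min=min(ys),
                    x_max=max(xs), y_max=max(ys),
                    area=len(xs),
                )
            )
    return objects


# A toy 6x5 "camera frame": two bright blobs on a dark background.
frame = [
    [0,   0,   0, 0,   0, 0],
    [0, 200, 200, 0,   0, 0],
    [0, 200, 200, 0,   0, 0],
    [0,   0,   0, 0, 255, 0],
    [0,   0,   0, 0, 255, 0],
]

for obj in detect_objects(frame):
    print(obj)
```

Once the scene is a list of records with positions and sizes, ordinary software can query it, filter it, and act on it. In that sense the image has become programmable.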

Vehicles That Are Beginning to Drive Themselves

Another clear sign of this shift is autonomous vehicles.

After years of exaggerated promises, the industry finally seems to be finding a more realistic path.

Instead of immediate full autonomy, we are seeing the gradual adoption of intermediate levels of automation, such as SAE Level 3 (conditional automation).

In this model:

- the car drives itself under certain conditions

- the driver can still take control, and must be ready to do so when the system asks

- sensor systems and AI continuously monitor the environment

It is not the fully autonomous future many imagined when Back to the Future came out in 1985.

But it is a concrete and functional step.

In other words, your co-pilot never rests!
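The handover between car and driver in this model can be pictured as a small state machine. The sketch below is a deliberate simplification and not any manufacturer's logic: it assumes three modes, assumes the car asks the driver to take over when its operating conditions are no longer met, and uses hypothetical names (`Mode`, `next_mode`) throughout.

```python
from enum import Enum


class Mode(Enum):
    MANUAL = "manual"                          # the human is driving
    AUTOMATED = "automated"                    # the car is driving itself
    TAKEOVER_REQUESTED = "takeover_requested"  # the car is asking the human to take over


def next_mode(mode, conditions_ok, driver_engages=False, driver_takes_over=False):
    """Return the next driving mode for one monitoring cycle (illustrative only).

    conditions_ok:     sensors and AI report that automated driving is allowed here
    driver_engages:    the driver switches automation on
    driver_takes_over: the driver grabs the controls
    """
    # The driver can always take control, whatever the current mode.
    if driver_takes_over:
        return Mode.MANUAL

    if mode is Mode.MANUAL:
        # Automation only starts on request and only under the right conditions.
        if driver_engages and conditions_ok:
            return Mode.AUTOMATED
        return Mode.MANUAL

    if mode is Mode.AUTOMATED:
        # The environment is monitored continuously; leave automation if it degrades.
        return Mode.AUTOMATED if conditions_ok else Mode.TAKEOVER_REQUESTED

    # TAKEOVER_REQUESTED: keep asking until the driver responds.
    return Mode.TAKEOVER_REQUESTED


# A short trip: engage on the highway, hit bad weather, hand control back.
mode = Mode.MANUAL
mode = next_mode(mode, conditions_ok=True, driver_engages=True)
mode = next_mode(mode, conditions_ok=True)
mode = next_mode(mode, conditions_ok=False)
mode = next_mode(mode, conditions_ok=False, driver_takes_over=True)
print(mode)
```

A production system would also need a fallback for when the driver does not respond to the request. This sketch leaves that out.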

The Next Wave of Startups

If the last decade was dominated by software startups, the next one may be dominated by startups that combine software, hardware, and AI.

Areas with enormous potential include:

- logistics robotics

- industrial automation

- automated inspection

- smart agriculture

- autonomous mobility

These companies are harder to build. They involve mechanical engineering, sensors, physical production, and complex integration.

But they also have much higher barriers to entry, which makes the ones that succeed harder to copy.

The World Is Becoming Programmable

Perhaps the deepest shift is this:

We are beginning to program the physical world, not just computers.

When sensors, AI, and robots connect, objects stop being passive things and start participating in intelligent systems.

Warehouses organize themselves.

Cars interpret traffic.

Machines make decisions in real time.

Technology is finally leaving the screens, and when AI gains legs and wheels, the impact is not only digital.

It happens in the real world.